How Claude Code's New Agent Team Reviews Every PR

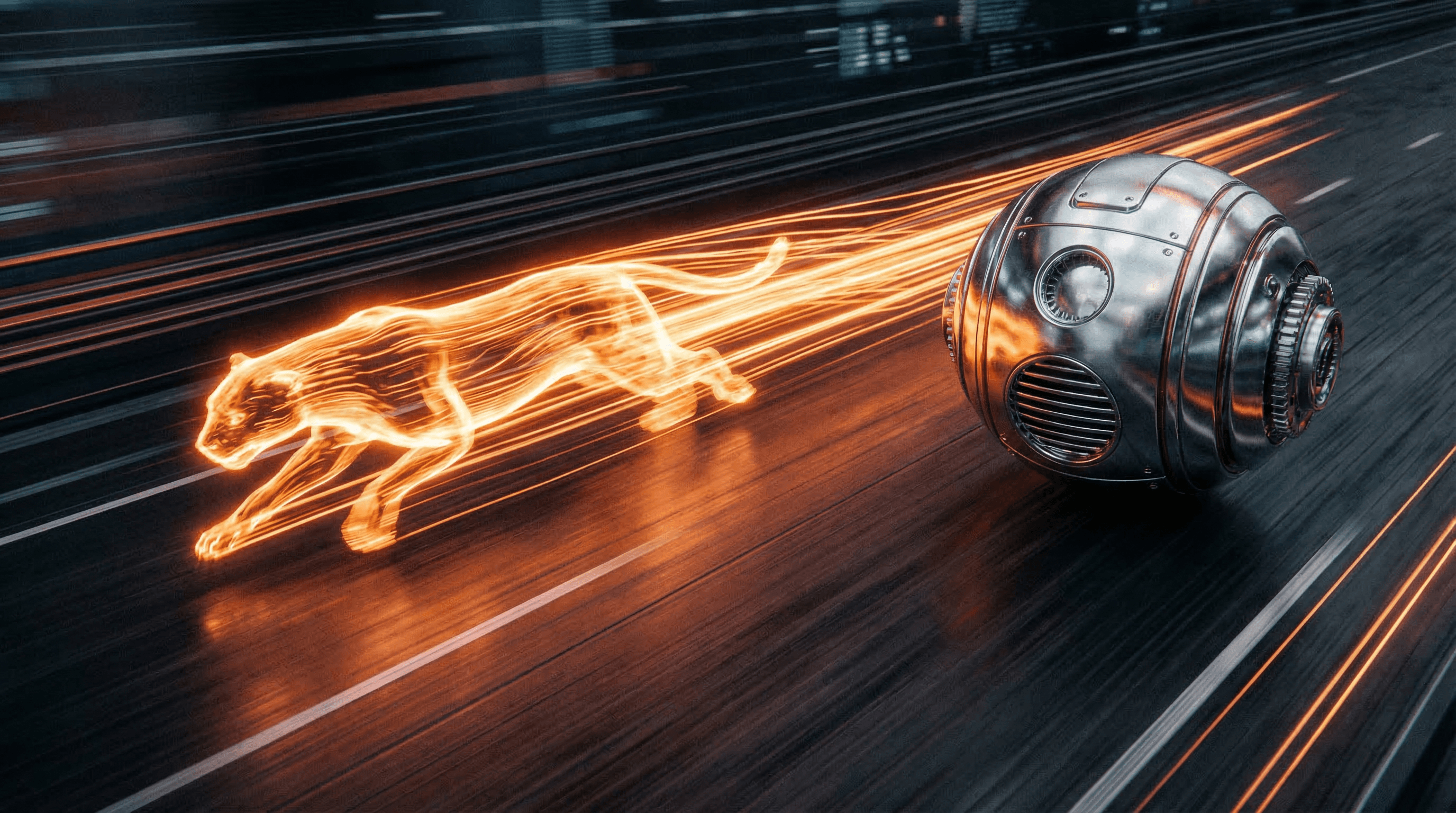

Claude Code now dispatches a team of AI agents to review every pull request. Here's how multi-agent code review works and why it matters.

Anthropic just shipped the most-liked developer tools announcement I've seen this year. 62,700 likes, 5,100 retweets. The feature: when a PR opens in your repo, Claude Code dispatches a team of agents to hunt for bugs. Not one agent reviewing the diff. A team. Multiple agents, each looking at different things, coordinating their findings.

I've been using Claude Code daily for months. This announcement changed how I think about what agents can do when they work together.

How does Claude Code's multi-agent code review work?

The traditional code review flow hasn't changed much in a decade. Someone opens a PR. A reviewer (usually one person, sometimes two) reads the diff, leaves comments, maybe requests changes. The bottleneck is always the reviewer's time and attention. Humans are bad at reviewing 400-line diffs. We skim. We miss things. We focus on style and miss logic bugs.

Claude Code's new approach is different. When a PR opens, the system spins up multiple specialized agents. Based on what Anthropic has shown, each agent has a different focus area. One looks at security vulnerabilities. One checks for logic errors. One examines test coverage. One reviews API contract changes. They run in parallel, each analyzing the diff from their own angle, and then their findings get consolidated into a single review.

This is multi-agent orchestration applied to a problem every developer faces. And it works because code review is naturally decomposable. You can split "review this PR" into independent sub-tasks that don't need to coordinate during execution, only when presenting results.

OpenAI is doing something similar with their Agents SDK repositories. They use what they call "skills" to maintain repeatable workflows for verification, integration tests, release checks, and PR handoff. Different framing, same idea: break a complex workflow into specialized agent roles.

The timing here matters. Six months ago, the conversation around AI code review was "can an LLM read a diff and leave useful comments?" That bar has been cleared. The new question is "can a team of agents review code as thoroughly as a senior engineer?" I think we're about 80% there for most codebases.

What I've noticed using Claude Code for my own PRs is that the quality of review depends heavily on context. An agent that only sees the diff misses things. An agent that can also read the surrounding codebase, understand the project architecture, and check against previous patterns catches more. The multi-agent approach lets you give each agent a narrow focus with deep context for that focus, rather than asking one agent to be good at everything simultaneously.

There are limits. I've seen AI reviewers flag things that aren't actually problems, especially around patterns that are intentional but unconventional. And they still struggle with "this technically works but the approach is wrong" feedback, which requires understanding the broader system design in a way that's hard to get from code alone. But for catching null pointer risks, missing error handling, test gaps, and security issues? In my experience, the agents are already better than most human reviewers. Humans get tired. Agents don't.

Why should you care?

If you write code for a living, this will change your workflow within the next six months. Here's how I see it playing out.

Code review goes from bottleneck to near-instant. Right now, most teams wait 4 to 24 hours for a review. That's not because the review takes that long. It's because the reviewer is doing other things and hasn't gotten to your PR yet. With agent-based review, you get initial feedback in minutes. The human reviewer still has a role, but they're reviewing the agent's review rather than starting from scratch. Faster iteration, fewer context switches.

Junior developers get a safety net. I remember being a junior dev and pushing code that I was 60% sure was correct, then waiting anxiously for the senior engineer's review. An agent team that catches common mistakes before the human reviewer even looks at it means fewer embarrassing "you forgot to handle the error case" comments. It levels the playing field.

The standard for "good enough to merge" goes up. When every PR gets a thorough multi-agent review, the baseline quality of merged code increases. Bugs that would've slipped through a tired Friday afternoon review get caught. Over months, this compounds into meaningfully more stable codebases.

For me personally, as a solo founder running RapidClaw, this is a force multiplier. I don't have a team of reviewers. I have me. Having an agent team review my PRs means I catch things I'd normally miss because I'm too close to the code. Last week an agent caught a race condition in my drip email cron job that I definitely would have shipped. That's a real bug that would have affected real users, caught by a machine.

What I'm doing about it

I've already integrated Claude Code into my development workflow. Every PR I open on RapidClaw gets an automated review. But the multi-agent team feature takes it further than what I was doing before.

I'm also thinking about this pattern for RapidClaw itself. Our users build AI agents on the platform. The idea of giving those agents the ability to work as a team, not just as individual bots, is something I've been sketching out. Imagine setting up three agents: one monitors your competitors, one tracks industry news, one manages your content calendar. Today they run independently. What if they could coordinate? The competitor agent detects a pricing change, tells the content agent to draft a response post, and the news agent checks if any publications covered it. Same multi-agent pattern, different domain.
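That kind of coordination maps naturally onto publish/subscribe. Here is a minimal sketch, assuming a simple in-process event bus. The agent functions, topic names, and payload fields are hypothetical, with stubs where model calls would go. It is not how RapidClaw works today, only one way the pricing-change flow could be wired.

```python
from collections import deque
from typing import Callable

Event = tuple[str, dict]                 # (topic, payload)
Handler = Callable[[dict], list[Event]]  # an agent reacting to one event


class Bus:
    """Minimal in-process publish/subscribe bus; a real system would use a
    durable queue so agents can run in separate processes."""

    def __init__(self) -> None:
        self.handlers: dict[str, list[Handler]] = {}
        self.log: list[Event] = []

    def subscribe(self, topic: str, handler: Handler) -> None:
        self.handlers.setdefault(topic, []).append(handler)

    def run(self, first: Event) -> None:
        # Deliver events breadth-first; handlers may publish follow-ups.
        queue = deque([first])
        while queue:
            topic, payload = queue.popleft()
            self.log.append((topic, payload))
            for handler in self.handlers.get(topic, []):
                queue.extend(handler(payload))


# Hypothetical agents with stubbed logic in place of model calls.
def competitor_agent(snapshot: dict) -> list[Event]:
    # Only speak up when the observed price actually moved.
    if snapshot["new_price"] != snapshot["old_price"]:
        return [("pricing_change", snapshot)]
    return []


def content_agent(change: dict) -> list[Event]:
    title = f"Our take on {change['competitor']}'s new pricing"
    return [("draft_ready", {"title": title})]


def news_agent(change: dict) -> list[Event]:
    # Stub: a real agent would search publications for coverage.
    return [("coverage_checked", {"competitor": change["competitor"], "articles": []})]


bus = Bus()
bus.subscribe("price_snapshot", competitor_agent)
bus.subscribe("pricing_change", content_agent)
bus.subscribe("pricing_change", news_agent)
bus.run(("price_snapshot", {"competitor": "Acme", "old_price": 29, "new_price": 19}))
print([topic for topic, _ in bus.log])
# ['price_snapshot', 'pricing_change', 'draft_ready', 'coverage_checked']
```

The competitor agent never calls the content or news agent directly. It only publishes an event, so adding a fourth agent means adding a subscription rather than editing the others. A production version would also need to guard against agents that keep triggering each other in a loop.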

Who should pay attention

Every engineering team that does code review, so basically everyone who ships software. Platform teams thinking about where to integrate AI into the developer experience. DevEx leads who are measuring time-to-merge and review throughput. And solo developers and small teams who can't afford the luxury of dedicated reviewers but still want quality gates on their code.

Frequently asked questions

Does Claude Code's agent review replace human code reviewers?

No, and I don't think it should. The agents catch mechanical issues: bugs, security gaps, missing tests, style violations. Human reviewers are still better at evaluating design decisions, questioning whether the feature should exist at all, and providing mentorship through review comments. The best setup is agents handling the first pass, humans handling the judgment calls.

How is multi-agent review different from a single AI code reviewer?

A single agent reviewing a large diff has to juggle multiple concerns at once: security, correctness, performance, style. It's the same problem humans have. A team of specialized agents can each focus deeply on one area without the cognitive load of tracking everything. The combined output is more thorough than any single pass.

Can I set this up for my own repository today?

If you're using Claude Code, the code review feature is available now. For other setups, you can build a similar workflow using the Anthropic API with multiple prompts targeting different review aspects. It takes some orchestration work, but the pattern is straightforward: split the review task, run agents in parallel, merge findings.
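For the do-it-yourself route, here is a sketch of the "multiple prompts targeting different review aspects" part. It assumes you send each prompt as a separate request to the Anthropic Messages API and ask the model to reply in JSON. The aspect list, prompt wording, and JSON shape are illustrative assumptions, not an official review format. The code only builds request bodies and parses replies, so it runs without network access.

```python
import json

# Hypothetical aspects: each becomes its own narrowly focused reviewer.
ASPECTS = {
    "security": "injection, unsafe deserialization, leaked secrets, authz gaps",
    "correctness": "logic errors, None/null handling, unhandled errors",
    "tests": "changed behavior that has no test coverage",
}

SYSTEM_TEMPLATE = (
    "You are reviewing a pull request. Focus ONLY on {focus}. "
    'Reply with a JSON array of objects with keys "file", "line", '
    '"message", or [] if you find nothing.'
)

REQUIRED_KEYS = ("file", "line", "message")


def build_requests(diff: str, model: str, max_tokens: int = 1024) -> dict[str, dict]:
    """One Messages API request body per aspect, keyed by aspect name."""
    return {
        aspect: {
            "model": model,
            "max_tokens": max_tokens,
            "system": SYSTEM_TEMPLATE.format(focus=focus),
            "messages": [{"role": "user", "content": f"<diff>\n{diff}\n</diff>"}],
        }
        for aspect, focus in ASPECTS.items()
    }


def parse_findings(aspect: str, reply_text: str) -> list[dict]:
    """Parse one reviewer's reply. Anything that is not a bare JSON array of
    well-formed findings (e.g. prose around the JSON) yields no findings."""
    try:
        items = json.loads(reply_text)
    except json.JSONDecodeError:
        return []
    if not isinstance(items, list):
        return []
    return [
        {**item, "aspect": aspect}
        for item in items
        if isinstance(item, dict)
        and all(key in item for key in REQUIRED_KEYS)
        and isinstance(item["file"], str)
        and isinstance(item["line"], int)
    ]


def merge(replies: dict[str, str]) -> list[dict]:
    """Combine every aspect's findings into one list ordered by file, line."""
    findings = [f for aspect, text in replies.items()
                for f in parse_findings(aspect, text)]
    return sorted(findings, key=lambda f: (f["file"], f["line"]))
```

To run it against the API, each body could be passed to the official Python SDK as `anthropic.Anthropic().messages.create(**body)`, with the reply text read from `response.content[0].text`. Sending the three requests concurrently (for example, with a thread pool) gives you the parallel fan-out. The model name is yours to supply. The JSON reply format is a convention this sketch requests in the prompt, not something the API guarantees, which is why malformed replies are dropped rather than trusted.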

I'm building RapidClaw to make AI agents accessible to everyone. Try it free.
