AI Agents Are Running Payroll Now. The Governance Gap Is Terrifying.

ADP rolled out payroll agents to 40+ countries. 82% of CHROs are deploying by May 2026. Only 21% have governance models. The EU AI Act deadline is August 2.

In the span of three weeks in April 2026, the three largest HR technology companies on Earth all announced payroll agents. ADP launched its Autonomous Payroll Engine across 40+ countries. SAP SuccessFactors rolled out "PayAgent" to its enterprise customers. Workday pushed a general availability release of its payroll processing agent to all Workday HCM clients. Each announcement used variations of the same language: reduced manual intervention, faster cycle times, fewer errors.

Underneath that language is a simple fact. AI agents are now calculating, validating, approving, and in some cases executing payroll disbursements for millions of workers. Not recommending. Not drafting. Processing.

The speed of adoption has outrun every governance structure designed to contain it.

82% deploying, 21% governing

The Gartner CHRO survey published April 8 found that 82% of Chief Human Resources Officers plan to have AI agents handling some portion of payroll processing by the end of May 2026. That is not a long-term roadmap. That is six weeks from now.

The same survey found that only 21% of those organizations have formal governance models for AI agents operating in financial processes. Not 21% of all companies. 21% of the companies actively deploying payroll agents.

The gap between those two numbers is where the risk lives.

Payroll is not a chatbot answering customer questions. A chatbot that gives a wrong answer creates a support ticket. A payroll agent that miscalculates overtime, misapplies a tax jurisdiction, or fails to account for a regulatory change creates a compliance violation, an employee lawsuit, or both. Multiply that error across 10,000 employees and even a $100 mistake per person adds up to seven-figure liability before anyone notices.

The executives signing off on payroll agent deployments understand this in the abstract. What they have not internalized is that traditional payroll controls were designed for human processors making human mistakes at human speed. A human payroll clerk who misclassifies an employee in one state will likely catch the pattern within a few pay cycles. An agent that misclassifies employees will do it consistently, at scale, across every affected employee, every pay period, until someone builds a check that catches it.

The failure mode is not random errors. It is systematic errors applied uniformly.

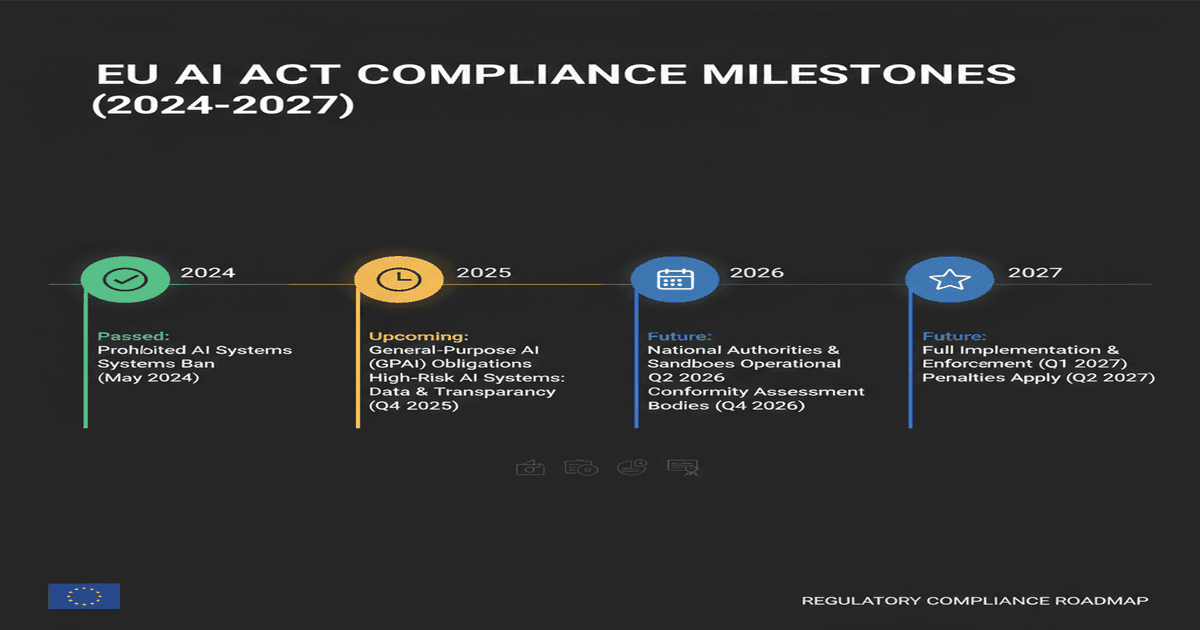

What "high risk" means after August 2

The EU AI Act's next major compliance deadline hits August 2, 2026. Under the Act's risk classification framework, AI systems that make decisions affecting workers' terms and conditions of employment are classified as "high risk." Payroll processing sits squarely in that category.

High-risk classification triggers a cascade of obligations: mandatory risk assessments, human oversight requirements, transparency documentation, data governance protocols, and ongoing monitoring with audit trails. Organizations deploying payroll agents in EU jurisdictions after August 2 without meeting these requirements face fines of up to 3% of global annual turnover or 15 million euros, whichever is larger.

Here is the problem. The 82% of CHROs deploying payroll agents are doing so on timelines that end in May. The EU compliance deadline is August 2. That leaves two to three months, at most, for organizations to retrofit governance frameworks onto systems that are already live and processing real money for real employees.

Retrofitting governance onto a running system is categorically harder than building it in from the start. Ask anyone who has tried to add audit logging to a production database after the fact. Now imagine that exercise applied to a system that touches every employee's bank account.

The NIST AI agent standards initiative published its draft framework in March, and it explicitly calls out financial processing agents as requiring the highest tier of identity verification and action authorization. But NIST frameworks are voluntary. The EU AI Act is not.

The anatomy of a payroll agent failure

To understand why governance matters, consider what a payroll agent actually does during a processing cycle.

It ingests time and attendance data from workforce management systems. It applies pay rules: base rates, overtime thresholds, shift differentials, union contract provisions, commission structures. It calculates statutory deductions: federal income tax, state income tax, FICA, state disability insurance, local taxes. It processes voluntary deductions: health insurance premiums, 401(k) contributions, HSA deposits, garnishments. It generates ACH files or wire instructions for disbursement. In some configurations, it initiates those payments.

Each step involves decisions. When an employee works in two states during a pay period, the agent must determine which state's rules apply to which hours. When a garnishment order arrives mid-cycle, the agent must calculate the correct withholding amount, respect the priority of competing garnishments, and ensure the employee's take-home pay doesn't drop below the federally protected minimum. When tax tables change, which happens constantly at the state and local level, the agent must identify the change, validate it, and apply it starting on the correct effective date.
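To make the garnishment decision concrete, here is a minimal sketch of the federal cap on an ordinary (consumer debt) garnishment for a weekly pay period: the lesser of 25% of disposable earnings, or the amount by which disposable earnings exceed 30 times the federal minimum hourly wage. The function name is hypothetical, the $7.25 wage is assumed to be current, and the sketch ignores child support orders, tax levies, competing orders, and stricter state limits, all of which a real system would have to handle.

```python
from decimal import Decimal

FEDERAL_MIN_WAGE = Decimal("7.25")  # assumed current federal hourly minimum
PROTECTED_MULTIPLE = 30             # weekly protected floor = 30 x minimum wage
MAX_SHARE = Decimal("0.25")         # at most 25% of disposable earnings


def max_weekly_garnishment(disposable: Decimal) -> Decimal:
    """Upper bound on an ordinary garnishment for one weekly pay period."""
    floor = FEDERAL_MIN_WAGE * PROTECTED_MULTIPLE
    # Earnings at or below the protected floor cannot be garnished at all.
    over_floor = max(disposable - floor, Decimal("0"))
    return min(disposable * MAX_SHARE, over_floor).quantize(Decimal("0.01"))
```

With these assumptions, an employee with $250.00 in weekly disposable earnings can have at most $32.50 withheld, because the protected floor of $217.50 binds before the 25% limit does.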

A human payroll team handles these decisions with a combination of explicit rules, institutional knowledge, and judgment calls escalated to supervisors. The agent handles them with whatever logic was encoded into its training and configuration. If that logic is wrong, or incomplete, or based on outdated regulatory data, the agent will execute confidently and incorrectly.

ADP's announcement mentioned that its payroll agent processes across "40+ countries." Each country has its own labor law, tax code, social insurance framework, and reporting requirements. Some countries change these rules quarterly. Germany's payroll regulations alone fill volumes that take specialized accountants years to master.

The question is not whether an AI agent can handle this complexity. The demos are impressive. The question is what happens when it gets something wrong, who detects it, how fast, and who is liable.

The liability vacuum

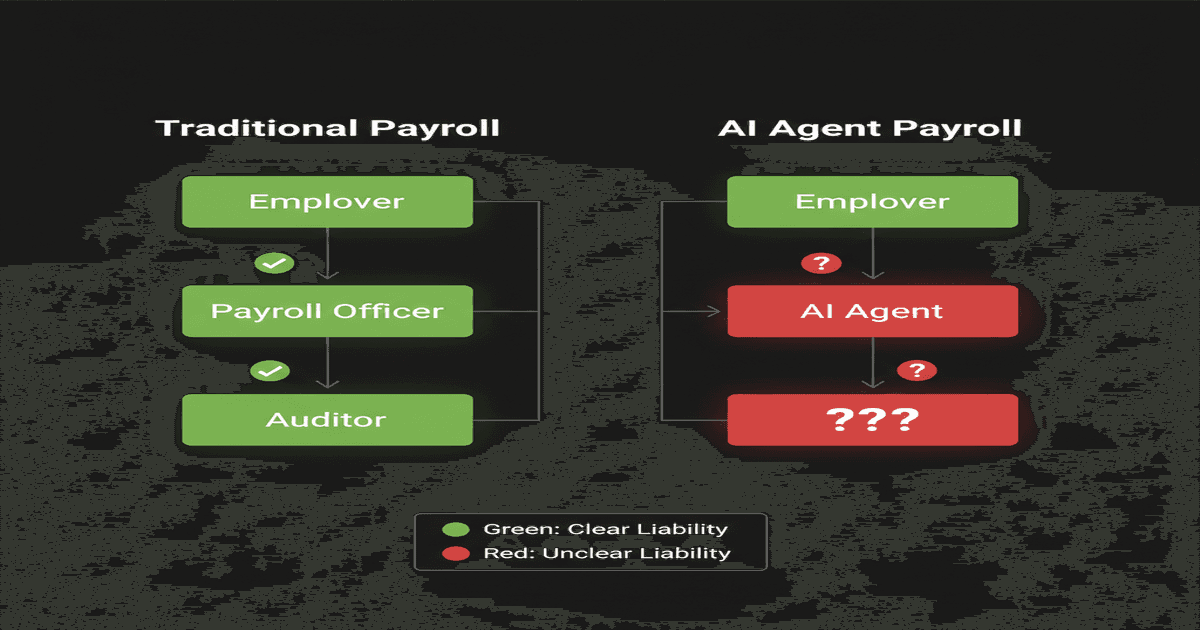

This is where the governance gap becomes a legal crisis. Traditional payroll processing has clear liability chains. The employer is responsible for accurate wage payments. If they outsource to a payroll provider like ADP, the contract specifies liability allocation. If a human processor makes an error, the error is attributable to an individual or a process, and the remediation path is established.

With agent-based processing, the liability question fractures. Did the error originate in the agent's training data? In its configuration? In the underlying model? In the data feed from the time and attendance system? In a regulatory update that the agent's knowledge base didn't incorporate in time? Each of these points to a different responsible party, and most deployment contracts haven't been updated to address agent-specific failure modes.

The first AI agent payments processed through Mastercard and Santander established that financial institutions are willing to let agents initiate transactions. But willing and prepared are different things. The regulatory infrastructure for agent-initiated financial transactions is still being built, in real time, while the agents are already running.

Some forward-thinking organizations are implementing what Deloitte calls "agent audit layers" — middleware that sits between the payroll agent and the disbursement system, validating every calculation against independent rule engines before allowing execution. This is the right idea. It is also expensive, complex, and being adopted by a fraction of the companies deploying agents.

What governance actually looks like

The 21% of organizations that do have governance models share some common patterns worth examining.

Dual-execution validation. The agent processes payroll independently. A separate system — sometimes a second agent with different training, sometimes a traditional rules engine — processes the same payroll independently. Discrepancies above a threshold trigger human review. This is computationally expensive but catches systematic errors that single-agent architectures miss.
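The comparison step in dual-execution validation can be sketched in a few lines. This assumes each run produces a mapping from employee ID to net pay; the function name, the one-cent tolerance, and the data shape are illustrative choices, not any vendor's API.

```python
from decimal import Decimal


def find_discrepancies(primary, shadow, tolerance=Decimal("0.01")):
    """Flag employees whose net pay differs between two independent runs."""
    flagged = {}
    # Take the union of IDs so an employee missing from either run is caught.
    for emp_id in primary.keys() | shadow.keys():
        a, b = primary.get(emp_id), shadow.get(emp_id)
        if a is None or b is None:
            flagged[emp_id] = "missing from one run"
        elif abs(a - b) > tolerance:
            flagged[emp_id] = f"net pay differs by {abs(a - b)}"
    return flagged
```

Anything this returns would go to human review instead of disbursement. The check is only as strong as the independence of the second run: two systems that share the same rule source will agree on the same wrong answer.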

Regulatory change management. A dedicated process for ingesting, validating, and applying regulatory changes to agent configurations. Not just updating a data feed, but testing the agent's behavior against known edge cases after every regulatory change. This requires maintaining a library of test scenarios that grows with every jurisdiction.
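One way to picture this is an effective-dated rate table plus a scenario library that is rerun after every change. The sketch below is hypothetical throughout: the rates, dates, and names are invented for illustration, and a real library would cover full pay calculations, not a single rate lookup.

```python
from bisect import bisect_right
from datetime import date
from decimal import Decimal

# Hypothetical rate history for one jurisdiction, sorted by effective date.
RATE_HISTORY = [
    (date(2025, 1, 1), Decimal("0.0400")),
    (date(2026, 7, 1), Decimal("0.0425")),
]

# Known edge cases: the day before and the day of a rate change.
SCENARIOS = [
    (date(2026, 6, 30), Decimal("0.0400")),
    (date(2026, 7, 1), Decimal("0.0425")),
]


def rate_on(pay_date, history=RATE_HISTORY):
    """Return the rate in effect on pay_date (latest entry not after it)."""
    dates = [d for d, _ in history]
    idx = bisect_right(dates, pay_date) - 1
    if idx < 0:
        raise ValueError(f"no rate in effect on {pay_date}")
    return history[idx][1]


def run_scenarios(scenarios=SCENARIOS):
    """Return (date, expected, actual) for every scenario that fails."""
    failures = []
    for pay_date, expected in scenarios:
        actual = rate_on(pay_date)
        if actual != expected:
            failures.append((pay_date, expected, actual))
    return failures
```

An empty list from `run_scenarios()` means every known edge case still behaves as expected. Each new regulatory change adds rows to both tables.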

Escalation protocols with SLAs. Clear rules for what the agent can approve autonomously and what requires human sign-off. These are not static — they tighten during regulatory transition periods, after system updates, and when processing payroll for newly acquired entities.
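A rough sketch of such a rule, assuming the trigger is the relative change in an employee's net pay from the previous period. The class, the field names, and the choice to halve the limit during heightened periods are assumptions made for illustration.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class EscalationPolicy:
    # Largest relative change in net pay the agent may approve on its own.
    max_auto_change: Decimal
    # Multiplier applied to the limit during transition periods (assumed 0.5).
    heightened_factor: Decimal = Decimal("0.5")


def needs_human_signoff(prev_net, new_net, policy, heightened=False):
    """Return True when a pay result must be escalated to a person."""
    if prev_net <= 0:
        return True  # no usable baseline (for example, a first paycheck)
    limit = policy.max_auto_change
    if heightened:
        limit *= policy.heightened_factor
    change = abs(new_net - prev_net) / prev_net
    return change > limit
```

With a 10% limit, a move from 2000 to 2150 (7.5%) is approved automatically in normal operation but escalated when `heightened=True`, since the limit tightens to 5%.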

Retroactive audit capability. The ability to reconstruct, for any pay period, exactly what data the agent ingested, what rules it applied, what decisions it made, and what output it produced. This goes beyond logging. It requires the agent's reasoning chain to be inspectable, which not all current payroll agent architectures support.
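The record-keeping half of this can be sketched as a hash-chained log, where each entry stores its inputs, the rules version, the output, and the hash of the previous entry, so later edits are detectable. The function names and record fields are hypothetical, and the sketch does not address the harder part described above, which is making the agent's reasoning inspectable.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first record


def _digest(body):
    # Canonical JSON (sorted keys) so the same record always hashes the same.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_record(log, inputs, rules_version, output):
    """Append one audit record, chained to the previous record's hash."""
    body = {
        "inputs": inputs,
        "rules_version": rules_version,
        "output": output,
        "prev_hash": log[-1]["hash"] if log else GENESIS,
    }
    log.append({**body, "hash": _digest(body)})


def verify(log):
    """Return True only if no record was altered, removed, or reordered."""
    prev_hash = GENESIS
    for rec in log:
        body = {k: v for k, v in rec.items() if k != "hash"}
        if rec["prev_hash"] != prev_hash or _digest(body) != rec["hash"]:
            return False
        prev_hash = rec["hash"]
    return True
```

Amounts are best stored as strings here so the JSON encoding is exact and stable across runs.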

Employee recourse mechanisms. A clear, documented process for employees to challenge agent-calculated pay. This sounds basic, but many organizations deploying payroll agents have not updated their employee handbooks or HR processes to account for the fact that a machine, not a person, calculated their paycheck.

The speed problem

The deeper issue is temporal. Payroll runs on fixed cycles. Biweekly. Semi-monthly. Monthly. Each cycle has a processing window, a validation window, and a disbursement deadline. Miss the deadline and employees don't get paid on time, which in many jurisdictions triggers automatic penalties.

Agent-based payroll processing is being adopted specifically because it compresses the processing window. What took a payroll team three days can be done by an agent in hours. Organizations are using that speed to reduce headcount, cut costs, and process closer to the disbursement deadline.

But compressed timelines also compress the window for catching errors. If the agent processes in four hours and disbursement happens two hours later, there are two hours to detect and correct a systematic error affecting thousands of employees. In a traditional three-day cycle, there were two days, a window 24 times longer.

Speed without governance is not efficiency. It is faster failure.

Can AI agents run payroll?

Yes, and they already are. ADP, SAP, and Workday have all deployed production payroll agents in 2026. The technology works for the majority of standard payroll scenarios — calculating regular pay, applying common deductions, generating tax filings, and initiating payments. The question is not capability but oversight. Organizations deploying payroll agents without governance frameworks, audit layers, and clear escalation protocols are taking on significant regulatory and financial risk. The 21% governance adoption rate suggests most companies are deploying first and governing later, which inverts the prudent order of operations.

Are AI payroll agents safe?

They are as safe as the governance wrapped around them. An agent with dual-execution validation, regulatory change management, and retroactive audit capabilities can be safer than a human payroll team, because it eliminates the inconsistency and fatigue-related errors that plague manual processing. An agent deployed without these controls, processing payroll across multiple jurisdictions with no independent validation layer, is a compliance incident waiting for a trigger. The EU AI Act's classification of payroll agents as high-risk systems reflects this reality — safety is not an inherent property of the technology but of the deployment architecture.

The individual parallel

There is an irony in the enterprise payroll agent story. Large organizations are spending millions to deploy agents that manage employee compensation at scale, while the individuals receiving those paychecks have almost no agent-based tools for managing their own financial lives.

The same architecture that lets ADP process payroll for 40 million workers — data ingestion, rule application, validation, action — applies at the individual level. An agent that tracks your income across sources, monitors your tax withholding, flags discrepancies in your pay stubs, and organizes your financial data is doing a simpler version of what enterprise payroll agents do.

The difference is access. Enterprise payroll agents cost six or seven figures to deploy. Personal financial agents should cost a fraction of that. The underlying pattern — an AI agent that monitors, processes, and acts on financial data according to rules you define — scales down just as well as it scales up.

If your employer is trusting an agent with your paycheck, it is worth asking whether you should have an agent watching the other end of that transaction. Not to replace your judgment, but to augment it. To catch the errors that the enterprise agent might introduce. To track the deductions, verify the math, and flag when something changes that shouldn't have.

The governance gap at the enterprise level is a systemic problem that regulators will eventually address. The governance gap at the individual level — the lack of tools for workers to verify and manage their own financial data — is one you can close yourself, starting today.

Your employer's AI handles your paycheck. Shouldn't your AI double-check it? RapidClaw deploys your personal agent in minutes.
